BIO

Logan Goldie-Scot is a VP of Research and Impact at Generate Capital, responsible for building and communicating the firm’s information advantage. He is focused on developing proprietary insights relating to Generate’s six core sectors, while supporting market-wide coverage and origination efforts.

Before Generate, Logan was at BloombergNEF from 2010 to 2022, leaving as Head of Clean Power research, a 30-person group spanning solar, wind, energy storage and power grids. At BloombergNEF he previously worked as a solar analyst, built and led the Energy Storage team, and developed the firm’s first clean energy Index and ETF, in collaboration with Goldman Sachs. Logan is a regular writer, speaker and conference panellist on topics relating to the energy transition. He has an MA (Hons) in Arabic from Edinburgh University and in 2019 completed executive training in Supply Chain Management at Stanford GSB.

Domain expertise, blurred lines, license to scale

Moratoriums, rule making, utility engagement

Our favorite articles and reports from the last month

Rate limit exceeded

Just over four years after the “ChatGPT moment”, February 2026 marked a new inflection point for how many of my peers and I use AI. The binding constraints shifted from model limitations (both perceived and real), and perhaps a lack of creativity and prioritization, to token and inference limits: “Rate Limit Exceeded”, “A bit longer, thanks for your patience.” We saw this breakthrough in coding first, but only now has the potential become clear to many non-coders: AI has gone from a glorified search bar or experimental side project to a valuable tool embedded in workflows. One Useful Thing has a good summary of the shift, as does Howard Marks in AI Hurtles Ahead. This personal conversion is happening against a backdrop of persistent, structural and well-documented challenges. Dan Wang’s 2025 letter thoughtfully frames why overcoming them matters:

Rather than “winning the AI race,” I prefer to say that the US and China need to “win the AI future.” There is no race with a clear end point or a shiny medal for first place. Winning the future is the more appropriately capacious term that incorporates the agenda to build good reasoning models as well as the effort to diffuse it across society. For the US to come ahead on AI, it should build more power, revive its manufacturing base, and figure out how to make companies and workers make use of this technology. Otherwise China might do better when compute is no longer the main bottleneck.

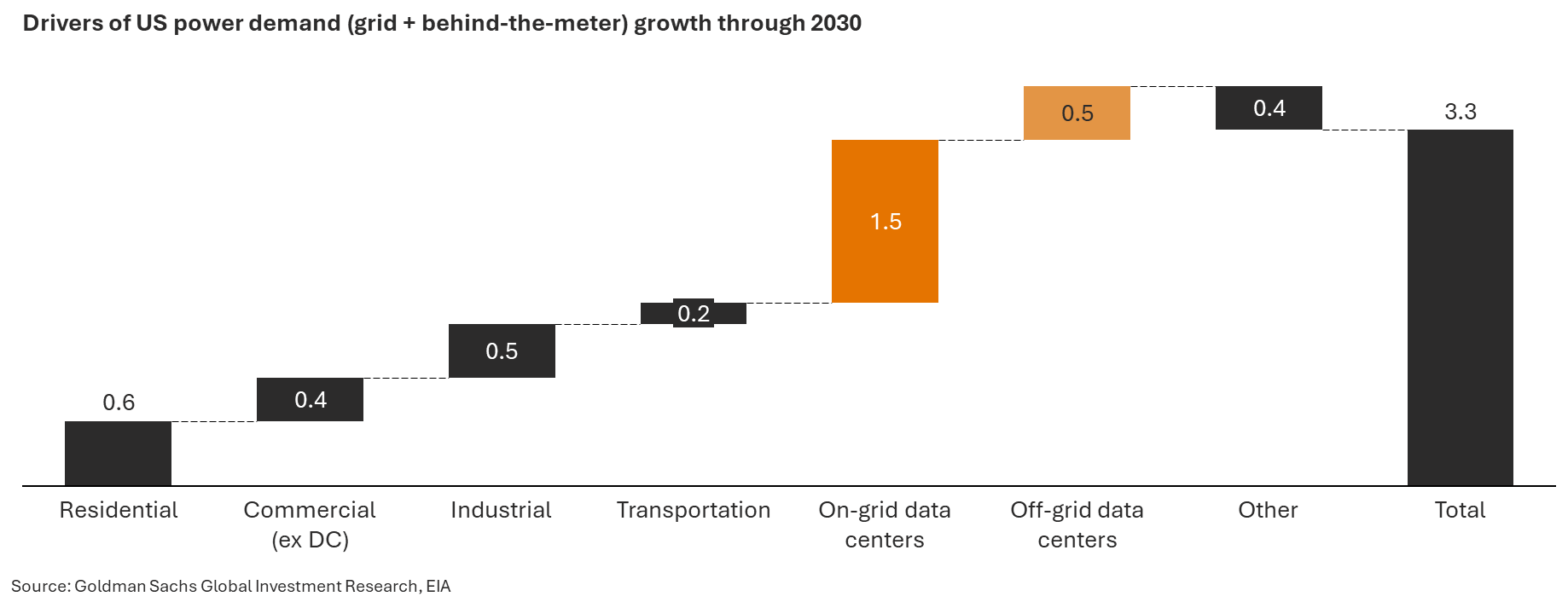

Wang’s prompt to ‘build more power’ is easy to state but notoriously difficult to execute. Powering a data center now requires simultaneous execution across three domains, each demanding deep, specialized expertise: the grid and interconnection, on-site generation, and policy and regulation. And while AI has made great strides, the limits to scaling compute quickly are intransigent. They are physical, geographic, and increasingly personal.

Domain experience

Amid the storm of reactions to last month’s sell-off in software-as-a-service companies, one quote from Steven Sinofsky resonated: “Domain experience will be wildly more important than it is today because every domain will become vastly more sophisticated than it is now.” (Death of Software. Nah.)

This is the lens through which to view both the physical challenges of data center build-out and the solutions to them. Power is the #1 constraint on data center delivery, although community opposition, water concerns, construction labor and long equipment lead times are overlapping and material challenges. Power systems have always been complex, but in this environment seasoned operators have a distinct advantage. They benefit from hard-won lessons, and they also understand that power development remains a domain where human involvement is intrinsic to the value of a service or product (h/t Séb Krier, Ghosts of Electricity).

Societal engagement

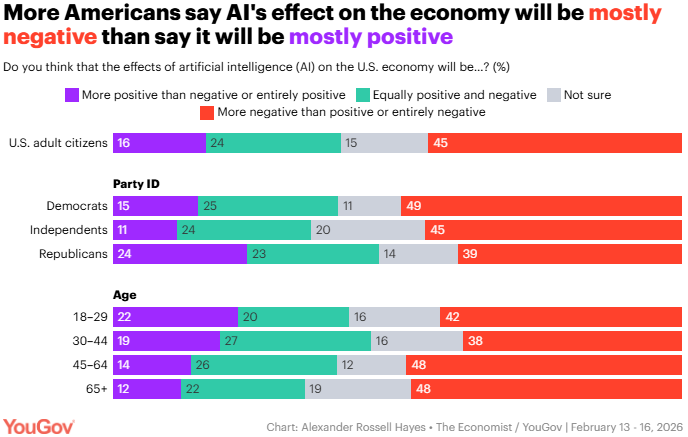

Community opposition to infrastructure projects is nothing new. Indeed, by the end of 2025, 24% of counties nationwide had some impediment to new utility-scale wind and solar energy, up from as few as 15% two years earlier (USA Today). However, data centers are the most tangible, physical manifestation of AI for the general public, and neither developers nor AI are widely popular: more Americans say AI’s effect on the economy will be mostly negative than say it will be mostly positive (YouGov). These societal impacts, along with growing anxiety over rising electricity bills, preferential tax treatment and water availability, now compound prior concerns tied to large infrastructure projects such as land use and air quality.

Site-specific

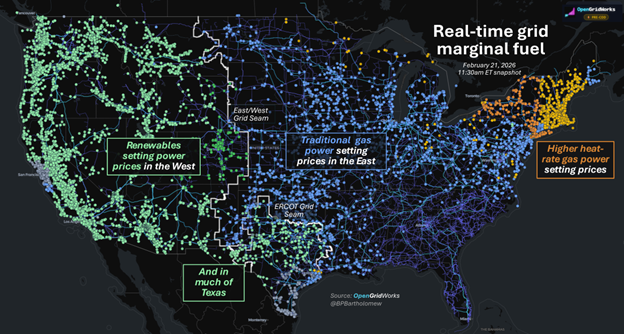

As maps of U.S. interconnections illustrate, the pathway to scale varies wildly by geography. The country is a hodgepodge of regional fuel mixes, fractured regulations, and divergent power prices. On February 21, for instance, renewables set power prices in the West and in ERCOT, while natural gas heat rate math drove prices in the East (The Merit Order). As you zoom in on specific areas, the differences often become starker. Energizing new load, whether behind the meter or via a grid connection, more closely resembles a service than manufacturing: it requires a lot of situation-specific adaptation rather than a high-volume, highly repetitive process (analogy poached from a fascinating Construction Physics article on “The AWS for Everything”).
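To make the “merit order” and “heat rate math” above concrete, here is a minimal sketch assuming a hypothetical four-unit supply stack with illustrative costs. None of these figures come from The Merit Order or any real market, and the names `Unit`, `gas_marginal_cost` and `clearing_price` are made up for illustration. Units are dispatched from cheapest to most expensive, and the last unit needed to meet demand sets the clearing price. A gas unit’s marginal cost is approximated as heat rate × gas price plus variable O&M.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Unit:
    """A generating unit in a hypothetical supply stack (illustrative only)."""
    name: str
    capacity_mw: float
    marginal_cost: float  # $/MWh


def gas_marginal_cost(heat_rate: float, gas_price: float, vom: float = 0.0) -> float:
    """Approximate a gas unit's marginal cost in $/MWh.

    heat_rate: MMBtu of fuel burned per MWh generated
    gas_price: $/MMBtu
    vom: assumed variable operations and maintenance cost, $/MWh
    """
    return heat_rate * gas_price + vom


def clearing_price(stack: list[Unit], demand_mw: float) -> tuple[str, float]:
    """Dispatch units cheapest-first; the marginal (last-needed) unit sets the price."""
    served = 0.0
    for unit in sorted(stack, key=lambda u: u.marginal_cost):
        served += unit.capacity_mw
        if served >= demand_mw:
            return unit.name, unit.marginal_cost
    raise ValueError("demand exceeds available capacity")


# Hypothetical stack: zero-fuel-cost renewables, then gas units priced by heat rate.
stack = [
    Unit("peaker", 2_000, gas_marginal_cost(heat_rate=10.0, gas_price=3.0, vom=5.0)),
    Unit("solar", 5_000, 0.0),
    Unit("ccgt", 4_000, gas_marginal_cost(heat_rate=7.0, gas_price=3.0, vom=2.0)),
    Unit("wind", 3_000, 0.0),
]

print(clearing_price(stack, 7_000))   # ('wind', 0.0): renewables are marginal
print(clearing_price(stack, 10_000))  # ('ccgt', 23.0): gas heat rate math sets the price
```

Real markets are far messier (the stack shifts hour by hour with solar output, outages, transmission limits and fuel prices), so this only illustrates why the price-setting fuel can differ between regions on the same day.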

Front- and behind-the-meter

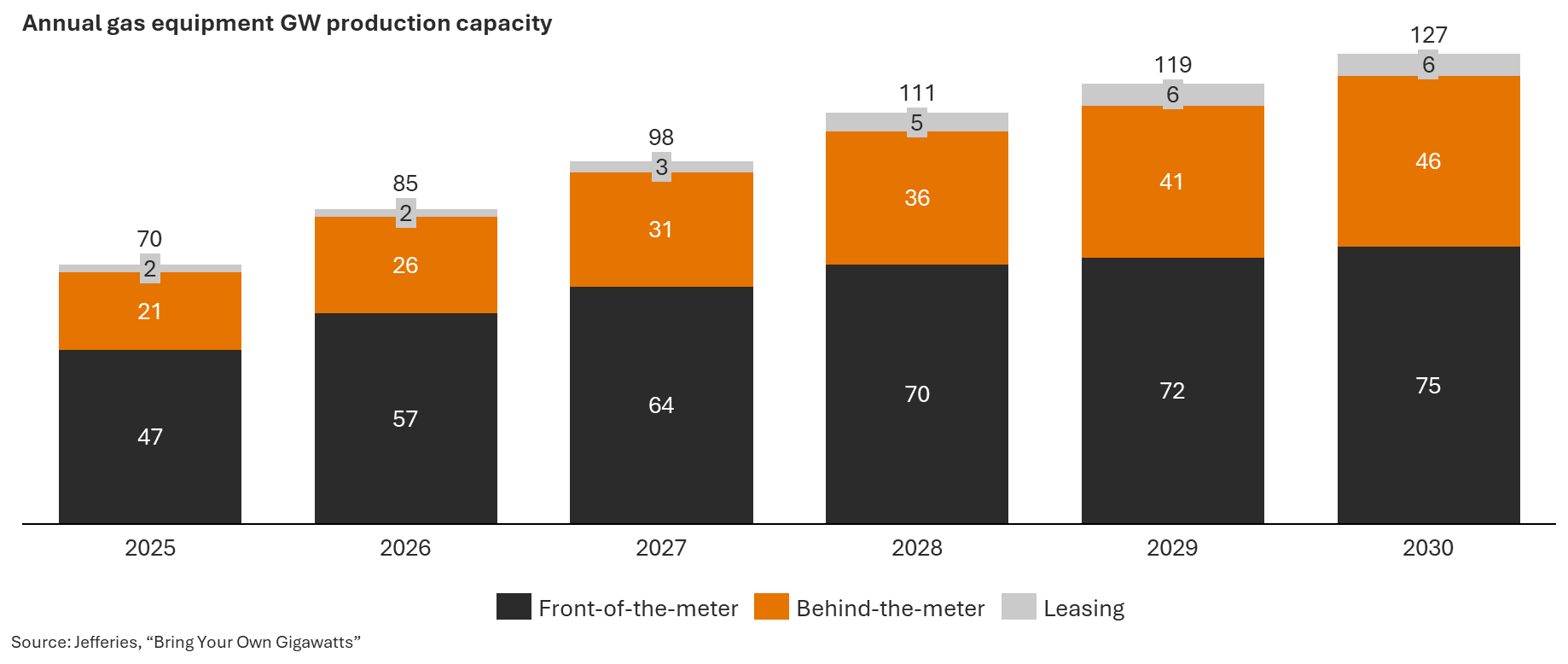

One reason for the rapid emergence of behind-the-meter (BTM) generation is that gas generation equipment is no longer as much of a constraint, as BTM providers reallocate and ramp up capacity and as the market proves the adage that necessity is the mother of invention: “the engines that once powered the retired aircraft could add up to as much as 40,000 MW of electricity generating capacity, or about 10% more than the current generation capacity of Arizona.” (EIA)

In the same way data center demand opened the door for new behind-the-meter gas deployments, it seems likely to spur uptake of other distributed solutions. As WeaveGrid’s CEO summed it up recently, there’s been “a sea change in how aware utilities are that, building my way out is not going to happen; burning my way out is not going to happen.” (Heatmap)

Policy notes

Proposed moratoriums underscore the need for more thoughtful societal engagement

The only common ground between the President’s State of the Union and the Democratic response was an effort to force large hyperscalers to pay the grid costs of building the power infrastructure needed to support data centers. That’s a response to polls showing not just significant opposition to data center development in communities across the country, but also Americans’ underlying skepticism of the core technology.

Traditional concerns tied to large infrastructure projects such as land use and air quality apply here, but they are now compounded by anxiety over rising electricity bills, preferential tax treatment, water availability, and broader questions about the societal impacts of the technologies these facilities enable.

Several hyperscalers and developers have stepped away from projects amid sustained community resistance. States introduced more than 1,200 AI-related bills in 2025 across more than twenty topics. Of these, 874 bills remain active or under consideration, with about 40% focused on private-sector use, indicating broad and sustained state activism (Jefferies). In particular, all five of the states with the largest number of data centers have introduced or enacted some restrictions on building data centers. Moratorium bills have been introduced in Virginia, Georgia and Oklahoma, among others, with additional action at the municipal level. A federal ban has also been proposed, though it is unlikely to advance, and the Trump administration has spent the month drafting a compact for major tech companies and data center developers to sign, pledging not to raise electricity bills or otherwise burden average Americans.

Moratoriums are blunt instruments. They do not resolve the nuanced cost allocation, grid planning, and resource management questions at the heart of charting a path forward. But the introduction of sweeping legislative tools signals something important: policymakers feel urgency to demonstrate responsiveness to public sentiment.

The biggest risk for data center developers is assuming that development will proceed without additional requirements or constraints. The biggest opportunity in this moment is engaging society and advancing policies that offer tangible value to local communities.

Complexity continues to define large load rule development

The rulemakings underway at wholesale markets continue to demonstrate the complexity of creating rules for integrating large loads.

As noted last month, a defining challenge of this quarter is the regulatory patchwork across overlapping authorities. While the Administration pushes for self-sufficiency, implementation falls to a layered network of grid operators, state PUCs, and utilities.

ERCOT, which sits outside the purview of FERC, is grappling with how to move quickly while also developing durable rules. ERCOT is introducing a Batch Study process to clear the load interconnection queue, and a primary topic is which projects will be included in a single transitional “Batch Zero” later this year. At the same time, major rulemakings to implement SB6 are advancing at the PUCT to create rules for co-location with existing generation and new interconnection standards. Stakeholders are particularly interested in enabling “Bring Your Own Generation” (BYOG) constructs and “Controllable Load Resources”. While much is in flux, the direction is clear: more stringent eligibility screens, clearer technical requirements, and stronger operational commitments. Early, independent technical diligence around transmission exposure, operability, and phasing will become essential.

PJM is moving just as quickly, implementing Board-directed reforms that include a reliability backstop procurement construct, expedited and curtailable interconnection tracks, revised behind-the-meter tariffs, and new transmission service options. Proposals continue to emphasize that implementation will require coordination with states that regulate retail sales within their borders. Utilities will ultimately be responsible for ensuring that large loads pay and for implementing any load curtailment. Questions remain about how backstop procurement interacts with BYOG models and whether large loads risk double paying for capacity.

Overlaying these regional efforts, NERC’s Large Loads Task Force and its recent Emerging Large Loads Technical Conference signal that reliability expectations for large loads are being refined quickly. NERC has identified gaps in how emerging large loads are studied, modeled, and integrated into planning and operations. This work could drive updates to Reliability Standards and registration criteria, and ultimately also shape state, ISO and FERC-led reforms.

Utility engagement can unlock near-term opportunities

While market-level rules continue to evolve, data centers are making faster progress by working directly with utilities.

In conversations at the annual NARUC Winter Summit, regulators emphasized that rate design must balance affordability with new demand growth, and customers shared their perspectives on keeping electricity costs down. Policymakers acknowledge that, if costs and risks are clearly assigned, large loads can put downward pressure on rates.

Forward-leaning utilities are not waiting for multi-year rate cases to tackle this topic. Some are developing new large load tariffs or special contracts to enable expedited service. Bespoke tariffs in Indiana, Arizona, and Nevada were highlighted, emphasizing a distinction from the traditional economic development rate discounts historically associated with “Special Contracts”. Instead, special contracts are being pursued to ensure appropriate cost allocation outside of general rate cases, reflecting a shift toward more explicit cost responsibility for large loads and a new sense of urgency.

Google’s recent announcements illustrate the model: funding transmission under Xcel’s Clean Energy Accelerate Charge, long-duration storage with Form Energy, and leveraging Nevada’s Clean Transition Tariff for geothermal procurement. In practice, utility engagement is unlocking near-term deployment even as broader market frameworks are still being written.

What we're reading

Death of Software. Nah (Steven Sinofsky). “AI changes what we build and who builds it, but not how much needs to be built. We need vastly more software, not less.”

Thin Is In (Stratechery). “Open-ended text prompts in particular are a terrible replacement for a well-considered UI button that both prompts the right action and ensures the right thing happens. That’s why it’s the agent space that will be the one to watch: what workflows will transition from UI to AI, and thus from a thick client architecture to a thin one?”

2025 letter (Dan Wang). “It’s nearly as dangerous to tweet a joke about a top VC as it is to make a joke about a member of the Central Committee.”

Guggenheim’s 2025 Infrastructure Review. Demographics, Debt, Deglobalization, and have flipped from tailwinds to headwinds: from drivers of growth to systematic risks.

Data Center Flexibility and Generation Capacity Over the Next Decade (Nicholas Institute for Energy, Environment and Sustainability). Estimates of degrees of flexibility and the implications for investments, costs and generation.

Roundabouts save lives but are they socialist? (Car Charts)

Lots on tariffs, but Adam Tooze’s Chartbook 434 stood out, as did Top Links 1016, citing the FT: “The close to 100 filings (for tariff protection by US firms) underscore the broad range of items that companies are now arguing pose a national security risk to the US. One company argues in its filing that “without bread, buns, baguettes, crusty rolls, cakes, muffins and the like”, soldiers in the US military “will not be able to maintain a healthy diet”.”

Reframing Energy for the Age of Electricity (Ember). “There are four battles in the energy system and electrotech is set to win three of them.”

Demand Efficiency M&V (WattCarbon). “Demand efficiency” has superseded “energy efficiency” as the prime directive of demand-side energy policy.

The Utility Business Model is Built for a Different Era. Regulators are Starting to Notice. (Michael Lee). “From a first principle, utilities sell trust. They happen to also sell electricity and build infrastructure, but they principally sell trust.”

More newsletters

February 2026 Newsletter

January 2026 Newsletter

The U.S. power grid is currently a study in constructive interference, a phenomenon where separate waves meet, their peaks align, and they merge into a single, amplified force. Capacity and generation shortages, the elevation of affordability to the center of politics, community opposition, and concerns about emissions and reliability are powerful dynamics individually. Together they may force long-lasting changes to the U.S. power system, as proposed wholesale changes across PJM this month illustrate.

December 2025 Newsletter

How 2025 sets the stage for 2026. A review of AI, interconnection reforms, clean power and large load growth.
