BIO

Logan Goldie-Scot is a VP of Strategy at Generate Capital, responsible for guiding our efforts to provide power to large load customers in a grid-constrained environment.

Prior to Generate, Logan joined BloombergNEF in 2010 and was Head of Clean Power research when he left in 2022. This was a 30-person group spanning solar, wind, energy storage and power grids. At BloombergNEF he previously worked as a solar analyst, built and led the Energy Storage team, and developed the firm’s first clean energy Index and ETF, in collaboration with Goldman Sachs. Logan is a regular writer, speaker and conference panellist on topics relating to the energy transition. He has an MA (Hons) in Arabic from Edinburgh University and in 2019 completed executive training in Supply Chain Management at Stanford GSB.

Agentic AI, conditional power, criminally cheap gasoline

By the numbers

Of perhaps more lasting value than the brief cameo by Olaf from Frozen during Jensen Huang’s keynote at GTC was Huang’s argument that we are at an inflection point for inference. “For the first time, you don’t ask AI what, where, when, how. You ask it to create, do, build.” With this comes a step change in compute intensity.

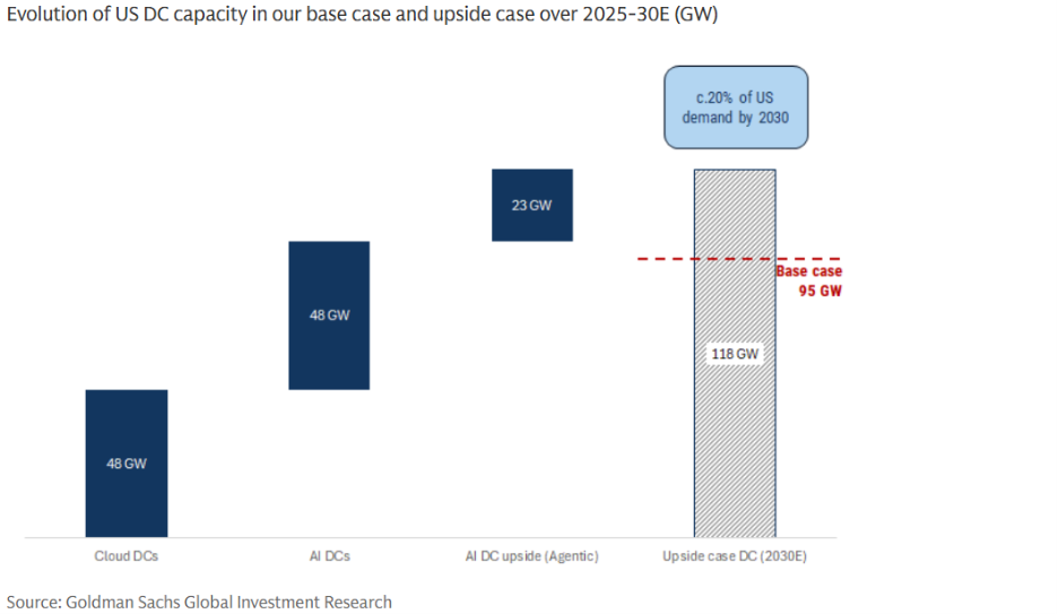

Agentic AI is the clearest expression of this shift and is much more energy-intensive than current chatbots: Goldman Sachs estimates that each ten percentage point increase in agentic queries versus its baseline projections boosts data center needs by roughly 25%.
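To make the Goldman Sachs sensitivity concrete, here is a minimal sketch of the arithmetic. It assumes a simple linear reading of the estimate (each additional ten percentage points of agentic queries adds roughly 25% of baseline data center needs); the source does not say whether the effect is linear or compounding, and the function name and the baseline value of 100 are hypothetical placeholders rather than figures from the estimate.

```python
def scaled_data_center_needs(
    baseline: float,
    extra_agentic_pp: float,
    uplift_per_10pp: float = 0.25,
) -> float:
    """Scale baseline data center needs for a higher agentic share of queries.

    Assumption: the uplift is linear, so every 10 percentage points of
    additional agentic queries adds `uplift_per_10pp` of the baseline.
    """
    return baseline * (1 + uplift_per_10pp * extra_agentic_pp / 10)


# Hypothetical baseline of 100 units (for example, GW of capacity).
print(scaled_data_center_needs(100, 10))  # 10 pp more agentic queries
print(scaled_data_center_needs(100, 20))  # 20 pp more agentic queries
```

Under this linear assumption, twenty extra percentage points would lift needs by about half over the baseline; a compounding reading would give a somewhat larger figure.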

A shift from training to inference also tilts the balance from capex toward recurring usage: “It’s the difference between selling a car and selling gasoline. Training was the car. Inference is the gas. Agentic AI is everyone leaving their engine running 24 hours a day.” (Crazy Stupid Tech).

Blurred lines, conditional service

Reforms are underway across U.S. wholesale markets that will ultimately make it easier for large loads and generators to connect to the grid. In the near term, flexibility combined with a mix of behind-the-meter and front-of-meter resources is the clearest path to power. The blurring of lines here necessitates a revised approach from large loads seeking power. As our CEO David Crane wrote in the above Expert View, “Power systems are not binary. The future won’t be purely grid-connected or behind-the-meter. It will be an integration of both, stitched together over time, involving physical infrastructure across multiple markets and differing regulatory frameworks.” Behind-the-meter resources, for instance, will need to operate in a way that meets the needs of the grid, in contrast to the imagery of a power fortress standing isolated atop a hill. Getting this right requires ongoing and active engagement with regulatory processes, both to master the proceedings and to inform them. Let’s take a look at a few examples.

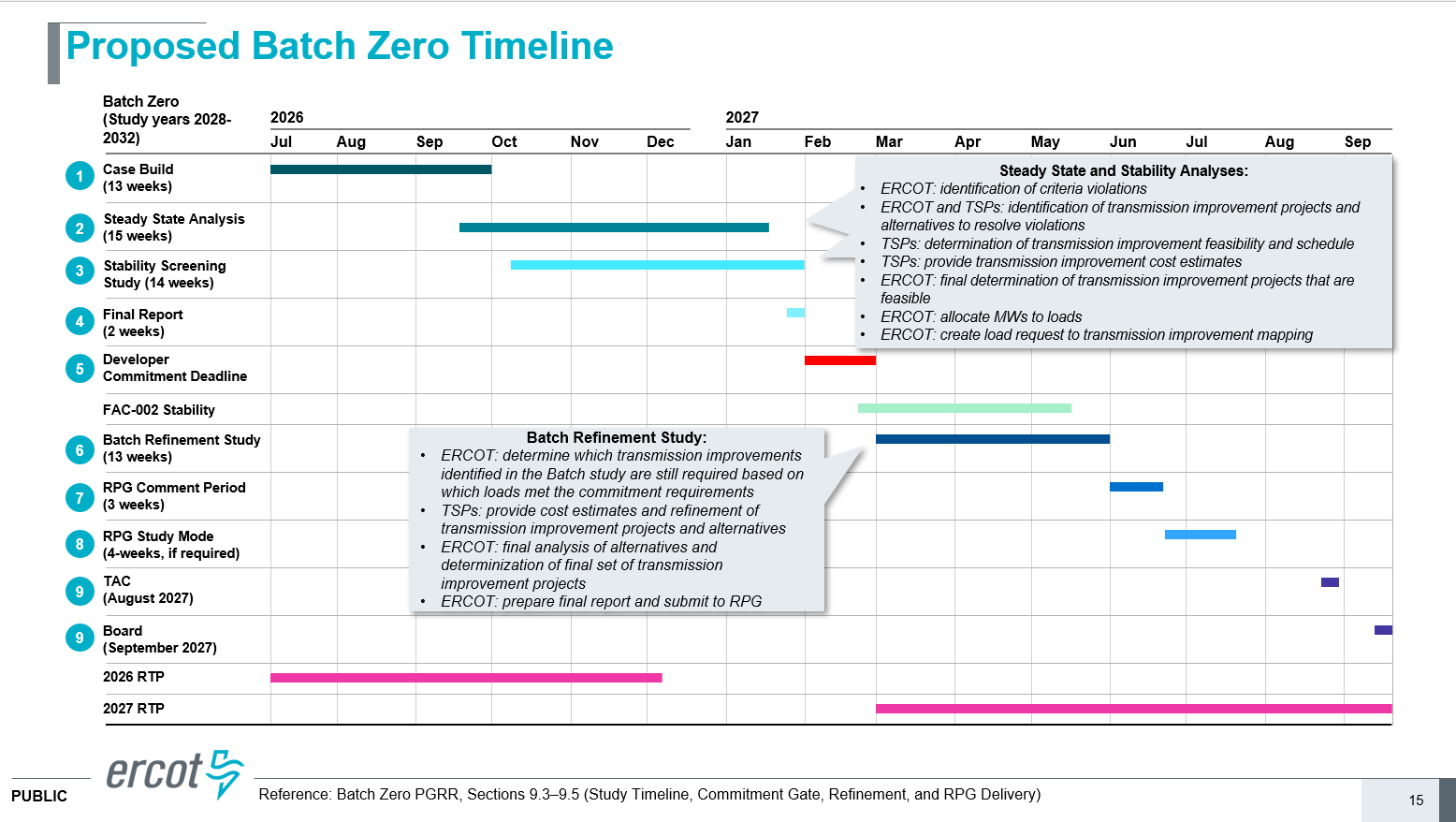

Large load energization approvals in ERCOT have largely ground to a halt ahead of the introduction of its new Batch Zero process, which is due to kick off in July. The exact eligibility criteria for inclusion in Batch Zero are still being developed but ERCOT published proposed rules on March 14th. At a minimum, to be included in Batch Zero for reliability assessment and to be allocated capacity, a project must have signed an intermediate agreement with the relevant utility and have received ERCOT approval of a steady state or stability study by July 24, 2026.

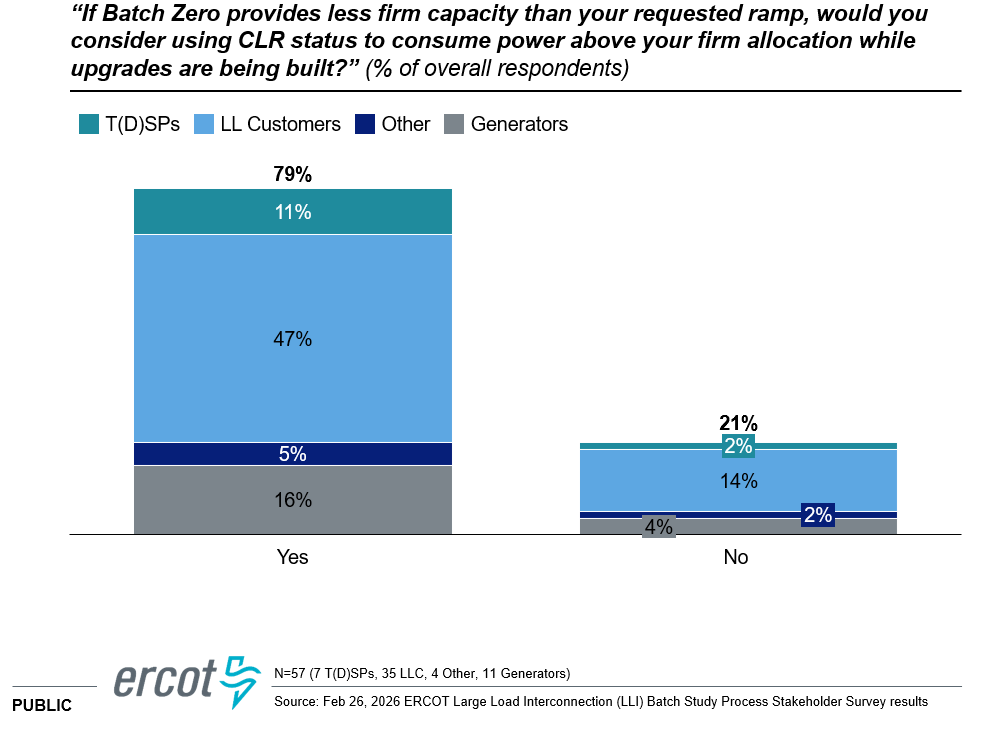
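The minimum Batch Zero criteria described above can be sketched as a simple screening check. This is an illustration only: the rules are still proposed, the class and function names are hypothetical, and the sketch assumes the July 24, 2026 date applies to the study approval rather than to the signing of the intermediate agreement, which the text leaves ambiguous.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional

# Cutoff date taken from the proposed rules described in the text.
BATCH_ZERO_CUTOFF = date(2026, 7, 24)


@dataclass
class LargeLoadProject:
    name: str  # hypothetical project label
    intermediate_agreement_signed: bool  # signed with the relevant utility
    # Date ERCOT approved a steady state or stability study, if any.
    study_approval_date: Optional[date] = None


def meets_minimum_criteria(
    project: LargeLoadProject, cutoff: date = BATCH_ZERO_CUTOFF
) -> bool:
    """Return True if the project clears the minimum bar described in the text.

    Assumption: the cutoff applies to the study approval date only.
    """
    return (
        project.intermediate_agreement_signed
        and project.study_approval_date is not None
        and project.study_approval_date <= cutoff
    )


ready = LargeLoadProject("Campus A", True, date(2026, 6, 30))
late = LargeLoadProject("Campus B", True, date(2026, 8, 15))
print(meets_minimum_criteria(ready), meets_minimum_criteria(late))
```

Clearing this bar would only make a project eligible for the reliability assessment; as the text notes, the exact criteria are still being developed.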

Kicking off this process is a major step forward for ERCOT, but the energization date for many projects studied in Batch Zero could still be years away. This opens the door for conditional or non-firm service from the grid. Large loads in ERCOT cannot currently be studied as curtailable; instead, they must be studied as receiving firm transmission service during grid peak. Under this setup, a load gains nothing from a study perspective by having a bilateral arrangement with a generator or battery, even if the projects are co-located behind the meter. Changing this requires a mechanism that lets large loads signal to the grid that they can flex. ERCOT’s ‘Controllable Load Resource’ (CLR) is the closest existing construct. It is currently an operational mode that allows ERCOT to dispatch load down in real time to zero or to an agreed-upon minimum value. CLR is not, though, a mode against which a project can be studied. This is hopefully set to change. Studying projects as CLRs would ideally allow them to interconnect sooner, because they would be taking ‘as available’ transmission service and therefore would not necessitate transmission upgrades. CLR appears fairly popular based on feedback presented at a recent ERCOT workshop, where 79% of respondents said they would consider using CLR status if Batch Zero provided less firm capacity than their requested ramp.

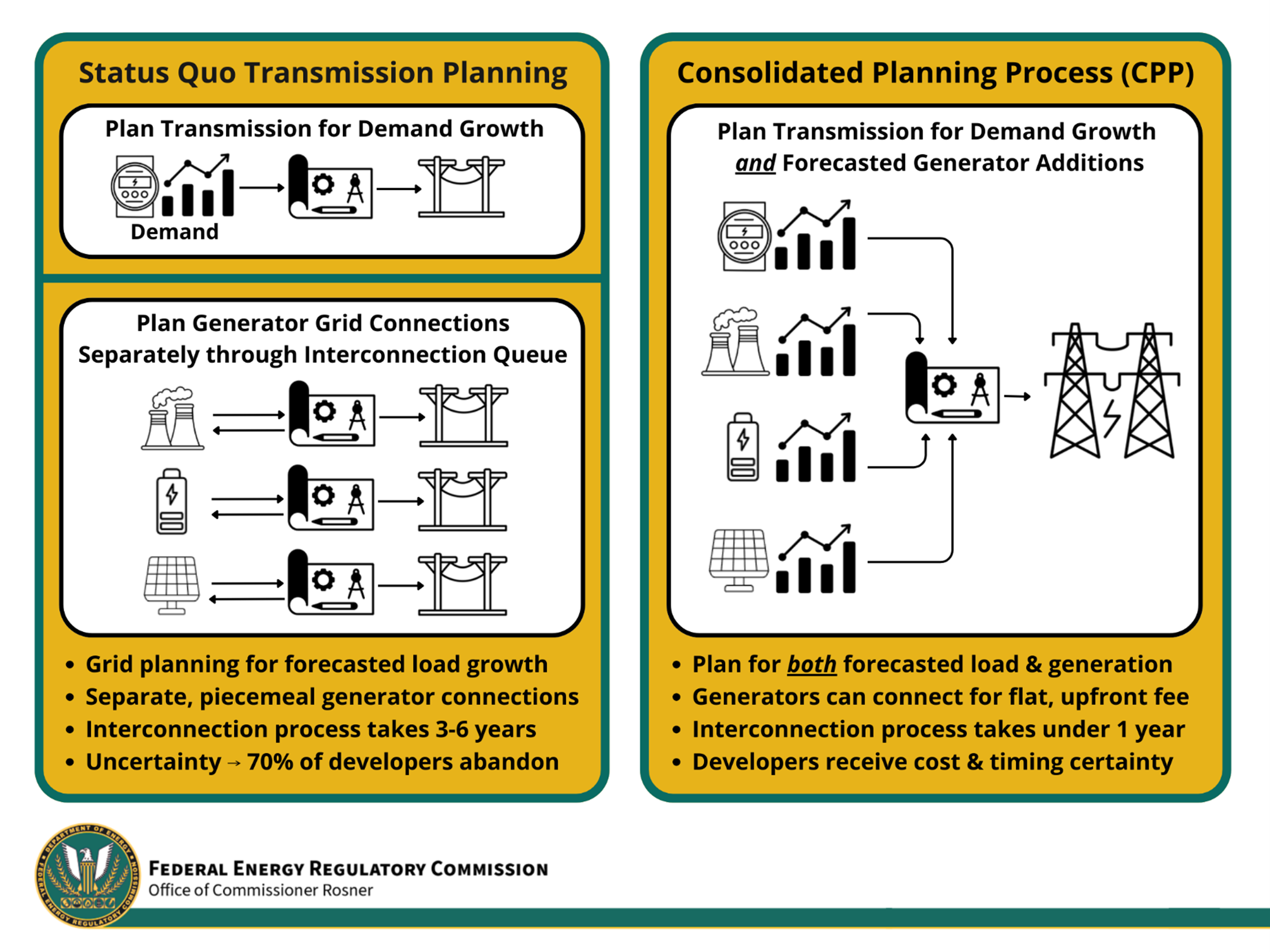
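The CLR behavior described above, where load is dispatched down in real time to zero or to an agreed-upon minimum, can be pictured as a simple clamp. This is a conceptual sketch rather than ERCOT’s actual dispatch logic; the function name, parameters, and megawatt values are all hypothetical.

```python
def dispatch_down(
    current_load_mw: float,
    instructed_mw: float,
    agreed_min_mw: float = 0.0,
) -> float:
    """Return the load level after a dispatch-down instruction.

    Assumptions: the load never drops below its agreed minimum, and a
    dispatch-down instruction never raises load above its current level.
    """
    target = max(instructed_mw, agreed_min_mw)  # respect the agreed floor
    return min(current_load_mw, target)  # only ever move downward


# Hypothetical 300 MW campus with a 50 MW agreed minimum.
print(dispatch_down(300, 120, agreed_min_mw=50))  # partial curtailment
print(dispatch_down(300, 0, agreed_min_mw=50))    # held at the agreed floor
print(dispatch_down(300, 0))                      # fully curtailable load
```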

Elsewhere, FERC approved SPP’s Consolidated Planning Process (CPP) earlier this month, which aims to improve cost transparency and reduce study timelines. The CPP will identify areas with available or planned transmission capacity, called Planned Interconnection Locations (PILs), and assign standardized per-megawatt interconnection costs (“Generalized Rates for Interconnection Development Contribution” or GRID-C). These rates reflect network upgrade costs and provide clearer price signals for developers. The first CPP queue window opens in April, with initial rates expected this fall. The process has been lauded by FERC, and if it succeeds, versions of it could be adopted by other markets. SPP is also already beginning Phase 2 CPP planning, which would incorporate large load cost allocation into the combined planning process.

SPP is the first market to combine transmission planning and generator interconnection into a single process as it strives to overcome these bottlenecks. The approval of CPP is, however, both a positive step forward and a reminder that most market solutions take a very long time to loosen constraints: SPP began working on the transmission reform that led to CPP in 2020 (Utility Dive). Hence the need for market participants to take interim measures.

PJM has its own long lead-time processes, several in fact. Its Reliability Backstop Procurement (RBP), a one-time special auction designed to ensure sufficient supply, is unlikely to alleviate near-term reliability issues, as most chosen projects will probably not materially affect system conditions until the early 2030s.

A more immediate, albeit interim, solution is its Connect and Manage framework, which would allow data centers to connect if they accept curtailment before pre-emergency demand response is deployed. Analysis in response to the proposal’s release found that curtailable data center loads could reduce costs for consumers in the near term (2028). Data center stakeholders are pushing for optionality via exemptions from Connect and Manage for loads tied to “qualifying new generation” (BYOG).

On March 25, PJM stakeholders approved moving forward with Connect and Manage issue charges and were presented with the BYOG issue charge. A task force for Connect and Manage will begin March 31, with a final proposal expected by August. BYOG proponents aim to advance their proposal next month and to have it addressed ahead of, or at least concurrently with, Connect and Manage, so that it can be built out as a feasible alternative.

Many of these proposals are directionally positive, but the details make or break them and the initial pass was not encouraging. Stakeholder filings are of course part of a negotiation, but the feedback was fairly direct: “These proposals will not enable commercially viable arrangements,” the Data Center Coalition said in a filing Wednesday. Or in the words of Jesse Jenkins, ‘PJM must do better.’ (LinkedIn)

Criminally cheap gasoline

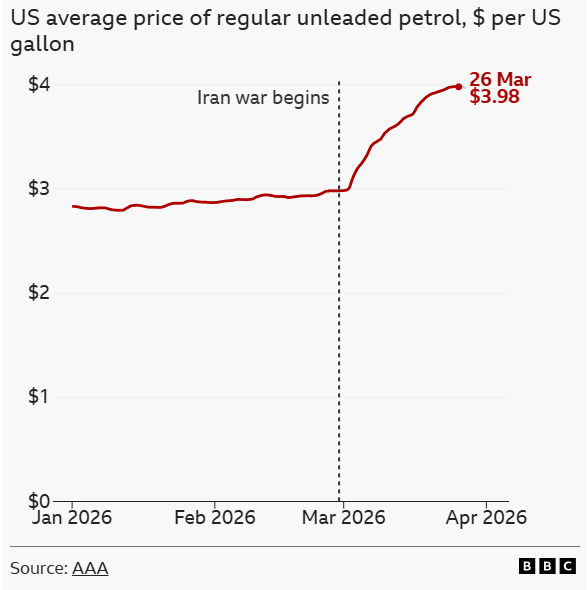

The U.S.-Israeli war on Iran is forcing energy rationing across much of Asia and parts of Europe, driven by the disruption of oil and LNG flows through the Strait of Hormuz. In the U.S., an energy exporter, the impact is narrower, but it has pushed gasoline prices up to $4/gallon. As a UK commentator on the Page94 Podcast remarked recently, “American gas prices have gone from insanely cheap to criminally cheap”. Nonetheless, vast expanses, fuel-inefficient cars and charged politics ensure that gasoline price spikes are keenly felt. “The salience of the gas price is further pumped up (pun intended) by two features as to how it is marketed and sold. First, I can’t think of another good whose price is broadcast on giant electronic signs on every other American street corner, so that one could not ignore the price if one tried. And second, I can’t think of another product which shows you in real time (at the pump) your wallet draining away as you watch. Painful.” (Car Charts).

Gasoline tends to take up a greater share of U.S. household budgets than electricity. Back in September we wrote “cost of living concerns, in particular electricity prices, are front of mind for many U.S. voters but two issues make it a harder line of attack for Democrats. For starters, the average gasoline price is down 10% in real terms in 2025, which makes up for recent electricity price increases.” (Who to blame for your electricity bill?) Simultaneous increases in gasoline and electricity prices come at a poor time for data center developers and hyperscalers, as upward pressure on electricity rates is being tied directly to the question of how we power the data center boom and who is going to pay for it.

This further elevates the importance of community engagement for data center companies. As President Trump recently said, ‘They need some PR help’, and they also need partners experienced at working with local communities. Most of the discussions to date have been around rates, water use, jobs and taxes and the like. More recent examples get into the minutiae of how these firms engage with communities, such as Microsoft ending the use of NDAs with local governments in an effort to ‘increase transparency around its data center developments’ (NPM). Just as data center firms need to secure power not just for day one but for the life of these projects, community engagement is not a one-and-done effort. The real work begins after you tie the knot.

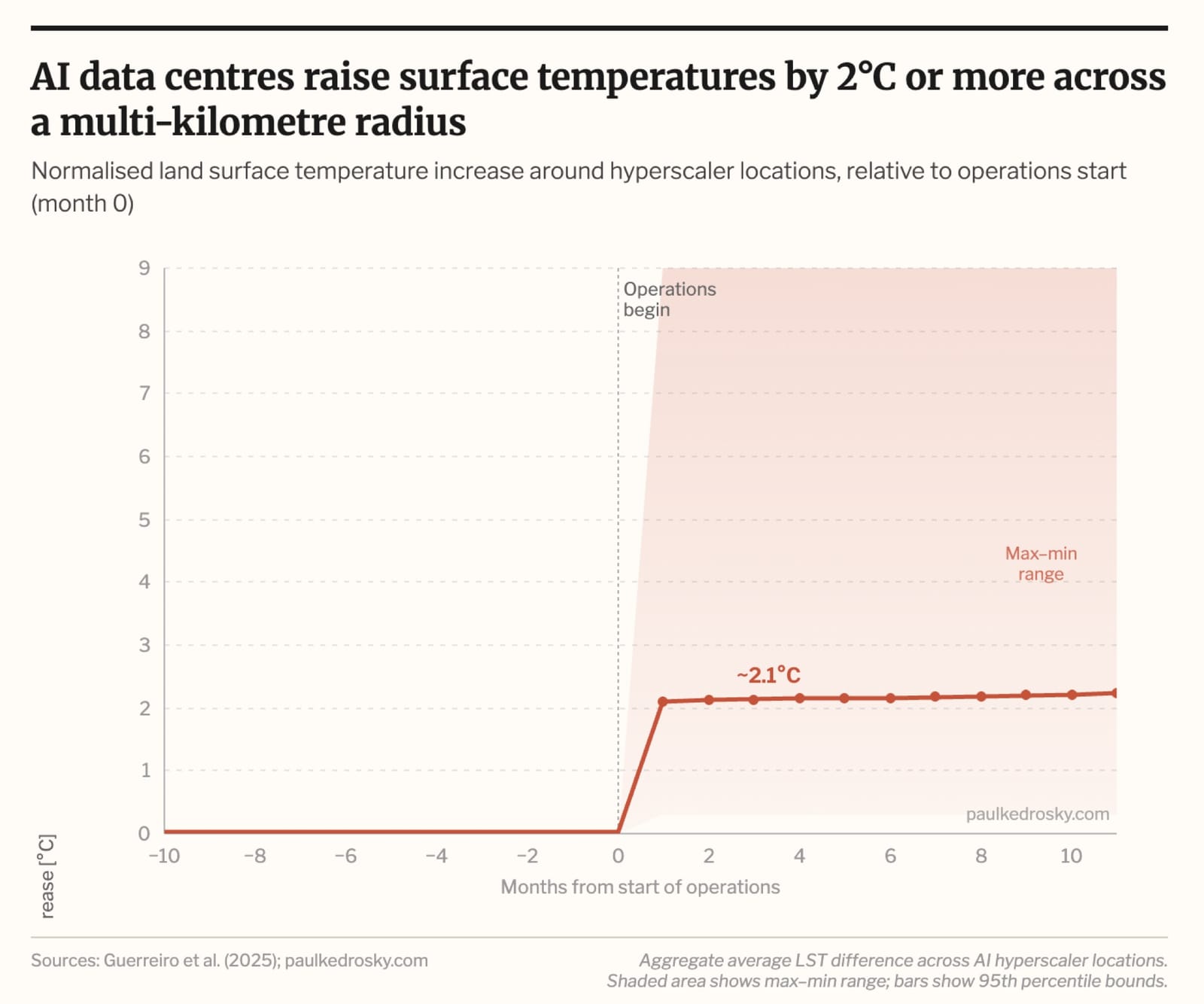

This is especially true as we better understand how data centers affect their local environment. “A new pre-print from researchers at Cambridge, NTU, and NUS uses satellite measurements of temperatures near AI hyperscaler locations worldwide and finds a consistent, sharp effect: within a month of a data centre coming online, land surface temperatures in the surrounding area rise by an average of 2°C and stay there” (Paul Kedrosky). The findings are striking if they hold up.
